AISN #71: Cyberattacks & Datacenter Moratorium Bill

Also, updates on the Anthropic vs. Pentagon court case.

We’re Hiring. Opportunities at CAIS include: Head of Public Engagement; Principal, Special Projects; Program Manager; Operations Manager; and other roles. If you’re interested in working on reducing AI risk alongside a talented, mission-driven team, consider applying!

AI Software Infrastructure Cyberattacks

Recently, cyberattacks targeting the AI industry’s software infrastructure stole private information potentially worth billions of dollars and inserted backdoors into developers’ computers. Google Threat Intelligence Group reported that one of the largest cyberattacks in this wave was carried out by North Korea-linked hackers.

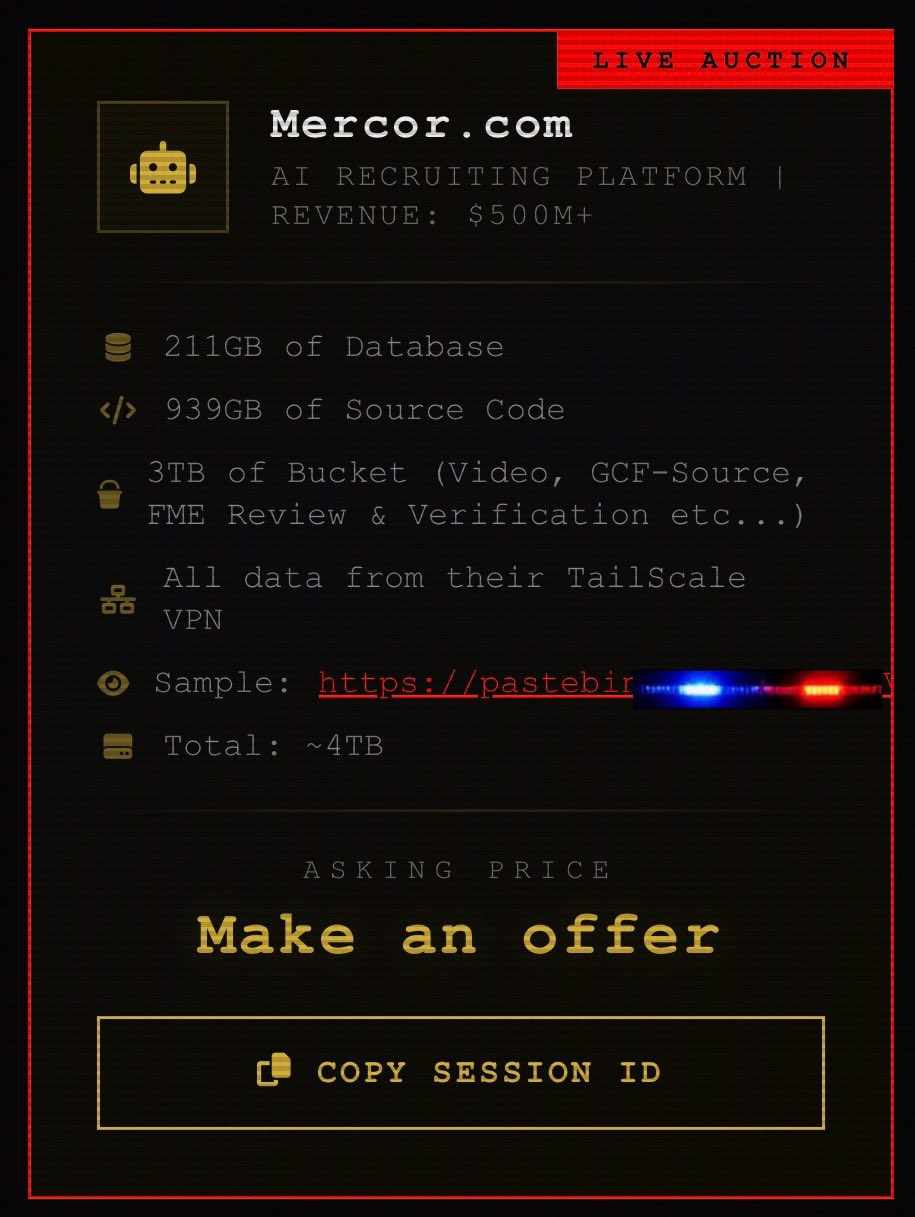

The stolen data may be worth billions. Hackers stole and auctioned private data from Mercor, an AI training data supplier for OpenAI and Anthropic that was recently valued at $10 billion. Mercor collects AI training data from a large number of experts, as well as highly sensitive personal and biometric data for identity verification. This attack compromises not only the data that Mercor sells, but also internal data that could be used to impersonate its hired experts. A person familiar with the situation stated that Mercor has paid the hackers’ requested ransom, although it remains unclear whether the hackers intend to release or sell the data regardless.

AI amplifies cyber risks. LLMs dramatically lower the bar for executing successful cyberattacks, and they continue to advance rapidly. An experiment in 2025 showed LLMs performing real-world cyberoffense better than many human cyberoffense professionals. Anthropic recently announced Claude Mythos, a closed-access LLM that has found critical vulnerabilities in every major operating system and browser, significantly advancing AI cyberoffense. Additionally, AI cyberattackers can be copied many times, allowing attacks on much broader sections of the AI software ecosystem at significantly lower cost than human labor.

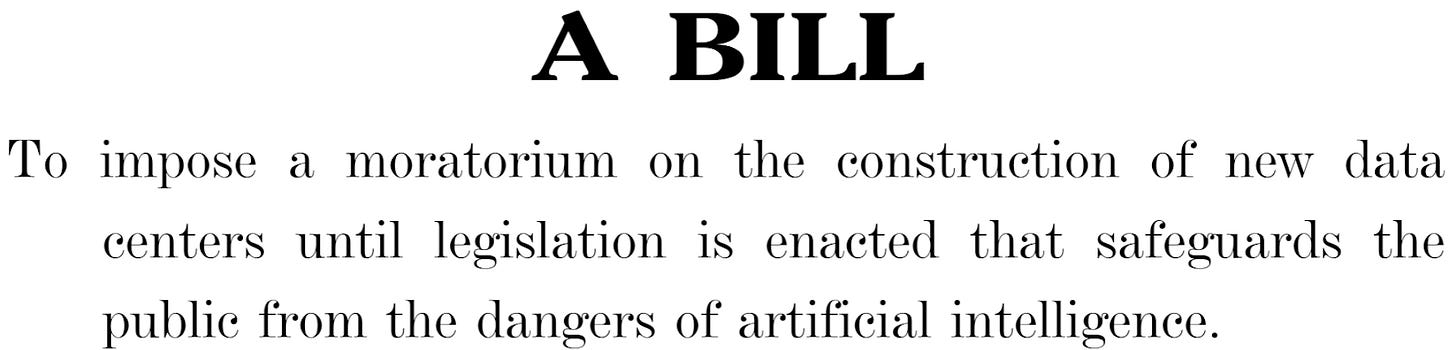

Datacenter Moratorium and Export Controls Bill

Bernie Sanders and Alexandria Ocasio-Cortez introduced a new bill to ban the construction of AI datacenters until several safety conditions have been met, and to prevent AI chip exports to countries without “comparable” safety measures.

The bill bans datacenter construction until several new regulations have been passed. If the bill passes, the moratorium can be lifted only if Congress explicitly passes laws that lift it and satisfy the following conditions:

Federal pre-market review of AI products: The government must review and approve AI products before release, ensuring they’re “safe and effective” and don’t threaten health, privacy, civil rights, or the future of humanity.

Worker protections: A law must prevent job displacement and ensure that the wealth generated by AI/robotics is “shared with the people of the United States.”

Datacenter construction requirements: Any datacenters built after the moratorium must meet a series of economic and environmental reviews.

The bill acts as a temporary blanket ban on all AI chip exports. No country currently meets the bill’s datacenter requirements, meaning that the bill would ban all AI chip exports out of the US if it is passed. Additionally, the bill leaves several definitions up to interpretation by regulators, such as what constitutes “comparable” regulations in other countries.

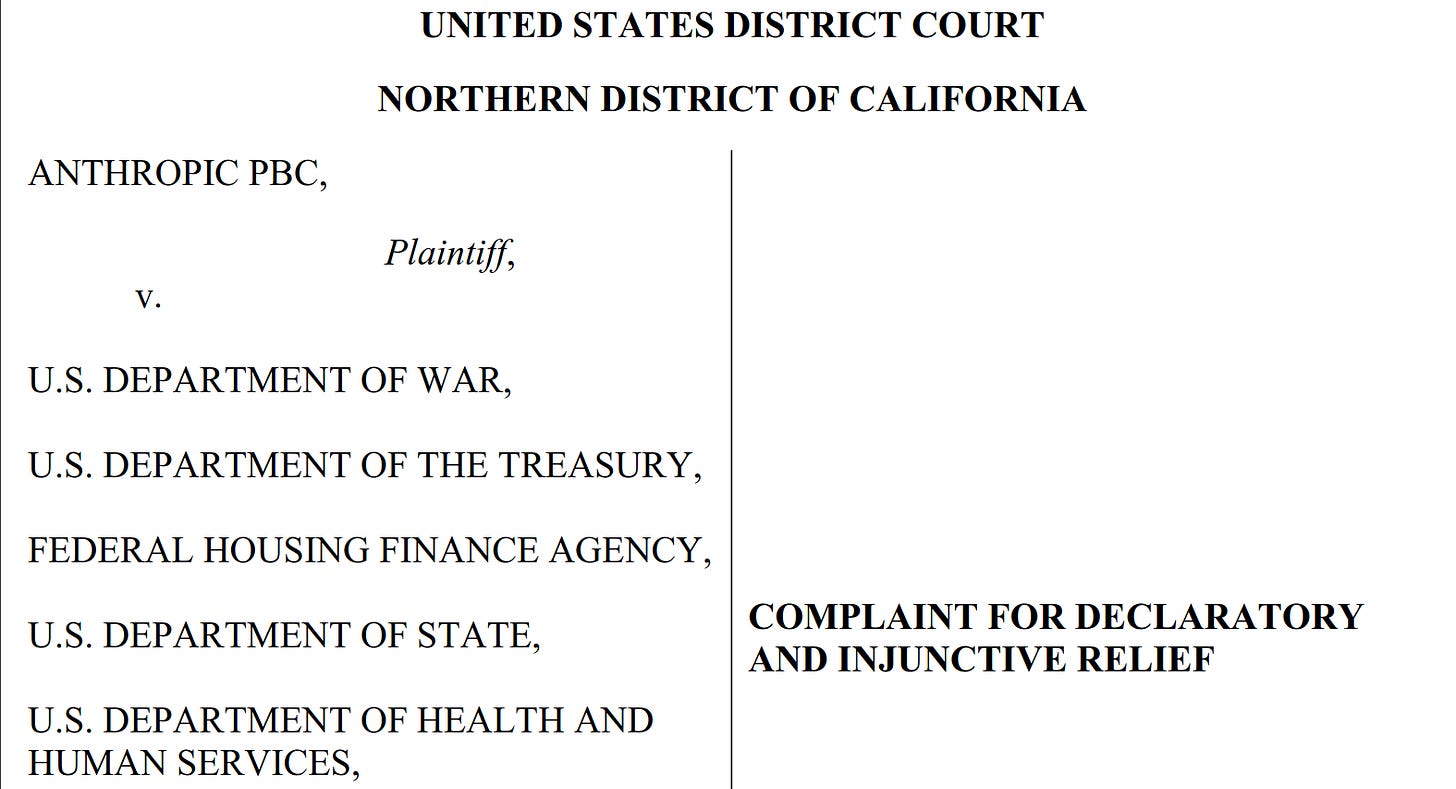

Anthropic v. Department of War Lawsuit

In early March, the Department of War (DoW) designated Anthropic a supply chain risk (SCR), restricting its ability to do business with military contractors and the military itself. The DoW used two federal statutes intended for adversaries and saboteurs, even though the conflict between the DoW and Anthropic emerged from a contract dispute.

Soon after, Anthropic challenged the designations in court, and Judge Rita Lin of the Northern District of California issued a preliminary injunction blocking one of the two SCR designations until a permanent decision is reached. The other SCR designation is being challenged in the D.C. Circuit.

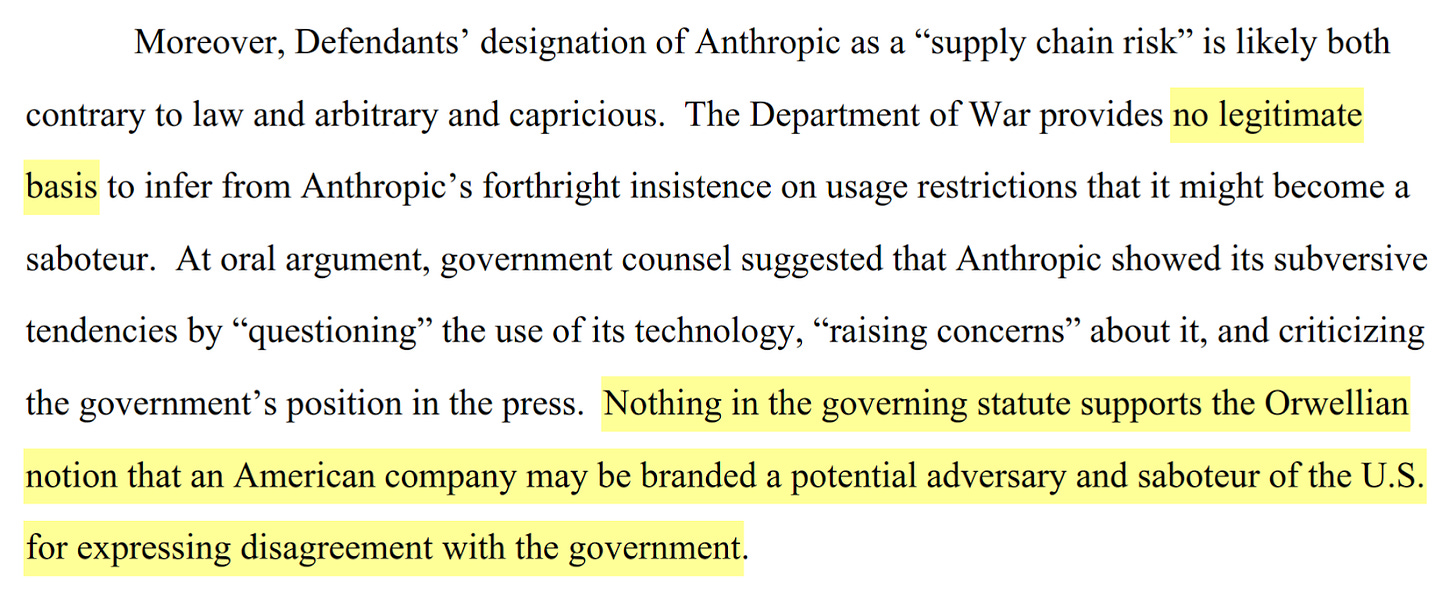

The court has taken a strong stance against the DoW. Judge Lin’s opinion (above) accompanying the preliminary injunction describes the DoW’s actions as “Orwellian,” saying that Anthropic was illegally “branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government.”

The DoW’s legal arguments diverged significantly from its public rhetoric. Despite the DoW’s statements about urgent “betrayal” by Anthropic, its legal case for the SCR designation centered on the risk of future sabotage. Anthropic has argued that Trump’s public statements ordering the entire US government to “IMMEDIATELY CEASE all use of Anthropic’s technology,” as well as Hegseth’s X posts, had harmful effects beyond the official SCR designations.

The DoW’s case centers on the risk of sabotage from Anthropic. The DoW expressed concerns about risks from sabotaged AI systems, which “[have] weights and measures that are set by Anthropic.” The DoW further argued that this control would allow Anthropic to insert a backdoor or “kill switch” into the model. However, Judge Lin pushed back on the idea that this case was about sabotage at all: “It is not my role to decide who’s right in that debate,” she said in court. “I see the question in this case as being a very different one, which is whether the government violated the law.”

Anthropic’s case in California is likely to succeed. In her opinion accompanying the preliminary injunction, Judge Lin concluded that Anthropic is likely to win the case for several independently sufficient reasons. For example, the DoW conceded in court that it did not follow the proper procedure for SCR designation, which requires notifying Congress of “less intrusive measures that were considered and why they were not reasonably available.” However, the D.C. Circuit has not granted Anthropic’s request for an emergency stay. The DoW is currently appealing the preliminary injunction to the 9th Circuit Court of Appeals.

In Other News

Government

WIRED reports that Iran has threatened strikes on American AI datacenters in the Middle East because of AI’s use in military targeting in Iran.

The White House appointed 13 advisors on science, consisting primarily of AI and power infrastructure executives.

Industry

Anthropic announced Project Glasswing, plans to use the new Claude Mythos model to defend cyber infrastructure in preparation for more widespread AI cyberoffense capabilities.

Meta announced Muse Spark, a new closed-source model approaching the frontier.

Anthropic leaked the source code for Claude Code.

Google and Arcee AI released Gemma 4 and Trinity-Large-Thinking respectively, two new and competitive open-source LLMs.

Civil Society

The AI Doc, a new documentary about AI risks, is now in theaters.

Fox 59 reports that an attacker shot at the house of an Indianapolis city councilmember who voted to approve a local datacenter construction project, leaving a note saying “NO DATA CENTERS.”

OpenAI organized a coalition to promote child safety in AI, claiming to partner with several child safety organizations that were unaware of OpenAI’s involvement.

If you’re reading this, you might also be interested in other work by the Center for AI Safety. You can find more on the CAIS website, the X account for CAIS, our paper on superintelligence strategy, our AI safety textbook and course, our AI dashboard, and AI Frontiers, a platform for expert commentary and analysis on the trajectory of AI. You can listen to the AI safety newsletter on Spotify or Apple Podcasts.