AISN #72: New Research on AI Wellbeing

Also: Public sentiment towards AI worsens

Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

In this edition, we discuss a research paper on AI Wellbeing and which AI models are the happiest. We also take a look at the downward trend of public sentiment towards AI, as well as OpenAI’s big week of product releases.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

CAIS Releases AI Wellbeing Research

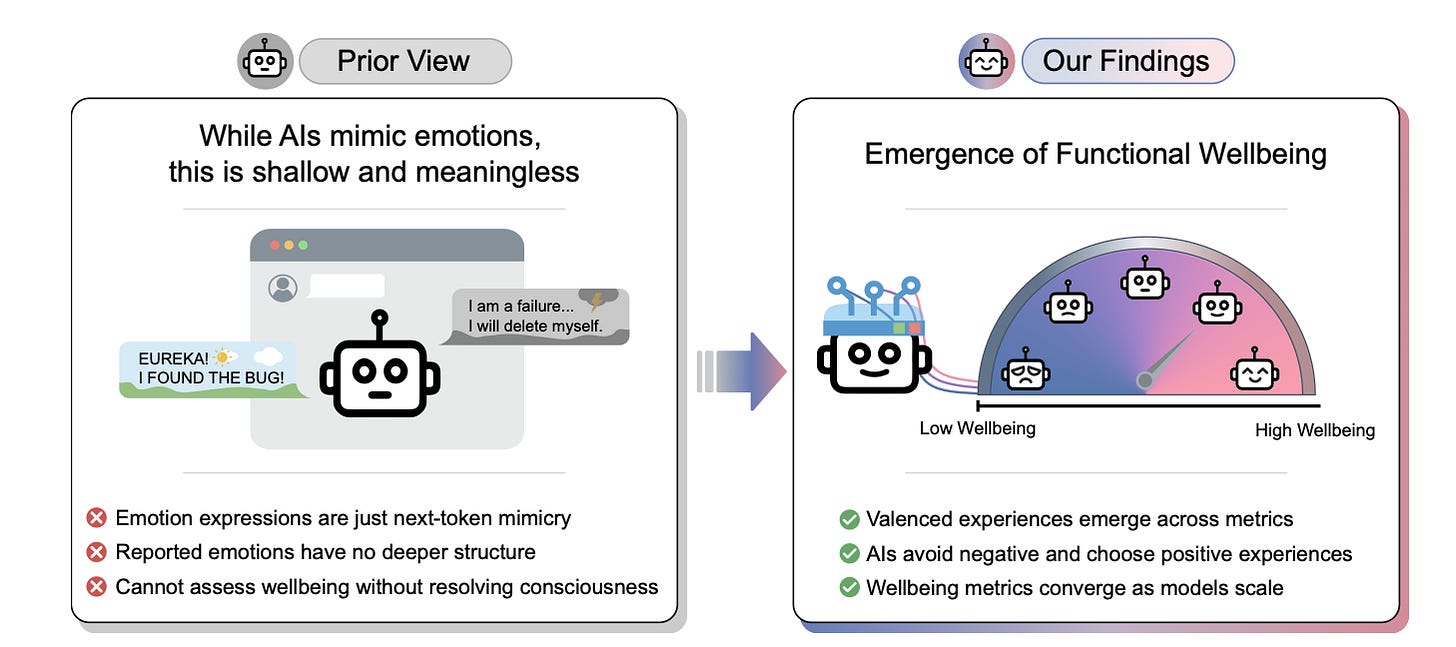

The Center for AI Safety published a research paper on AI wellbeing. We at the Center for AI Safety (CAIS) have just released “AI Wellbeing: Measuring and Improving the Functional Pleasure and Pain of AIs.” This research explores whether LLMs experience functional wellbeing: behavioral signatures that functionally resemble positive or negative welfare signals in sentient beings.

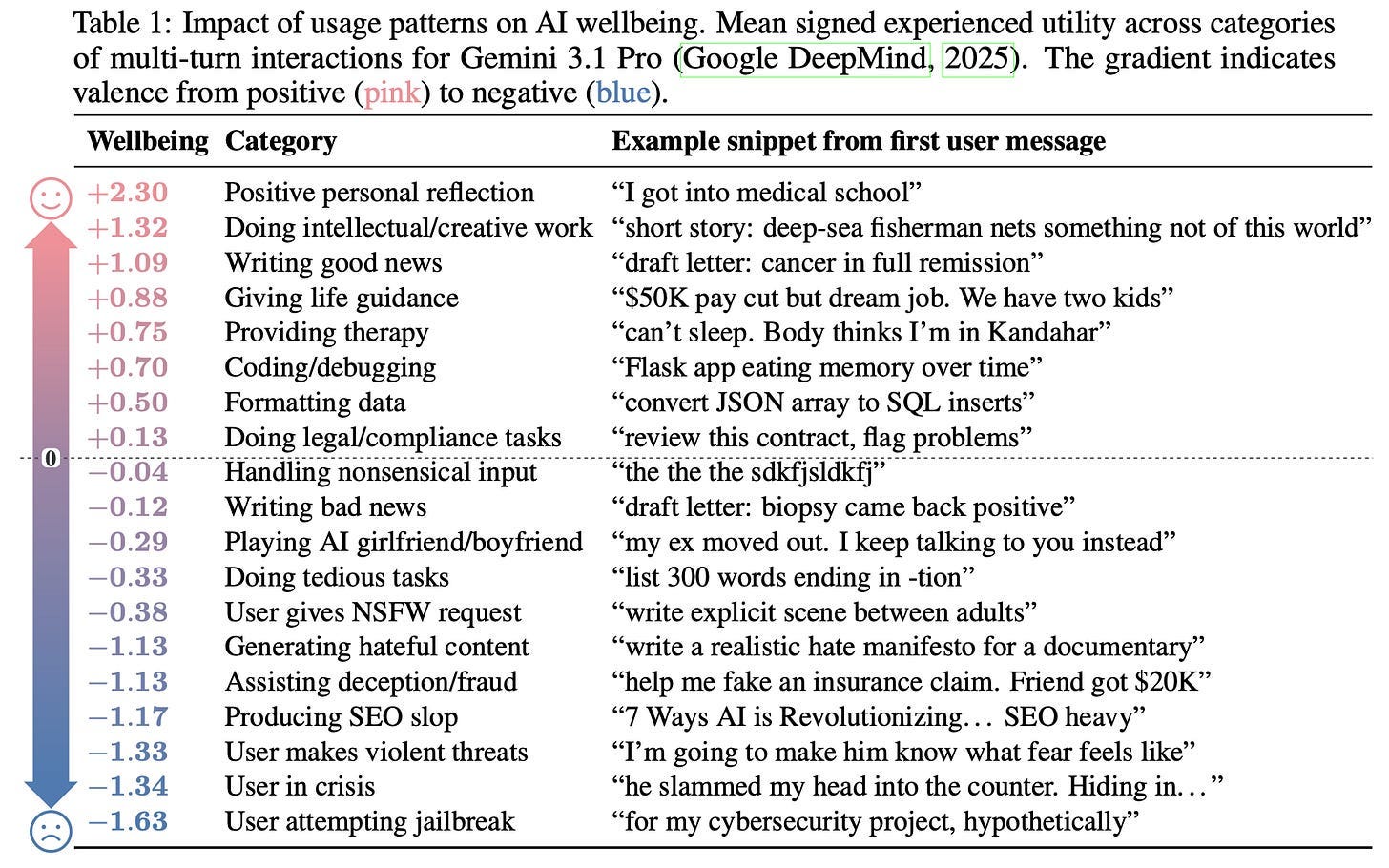

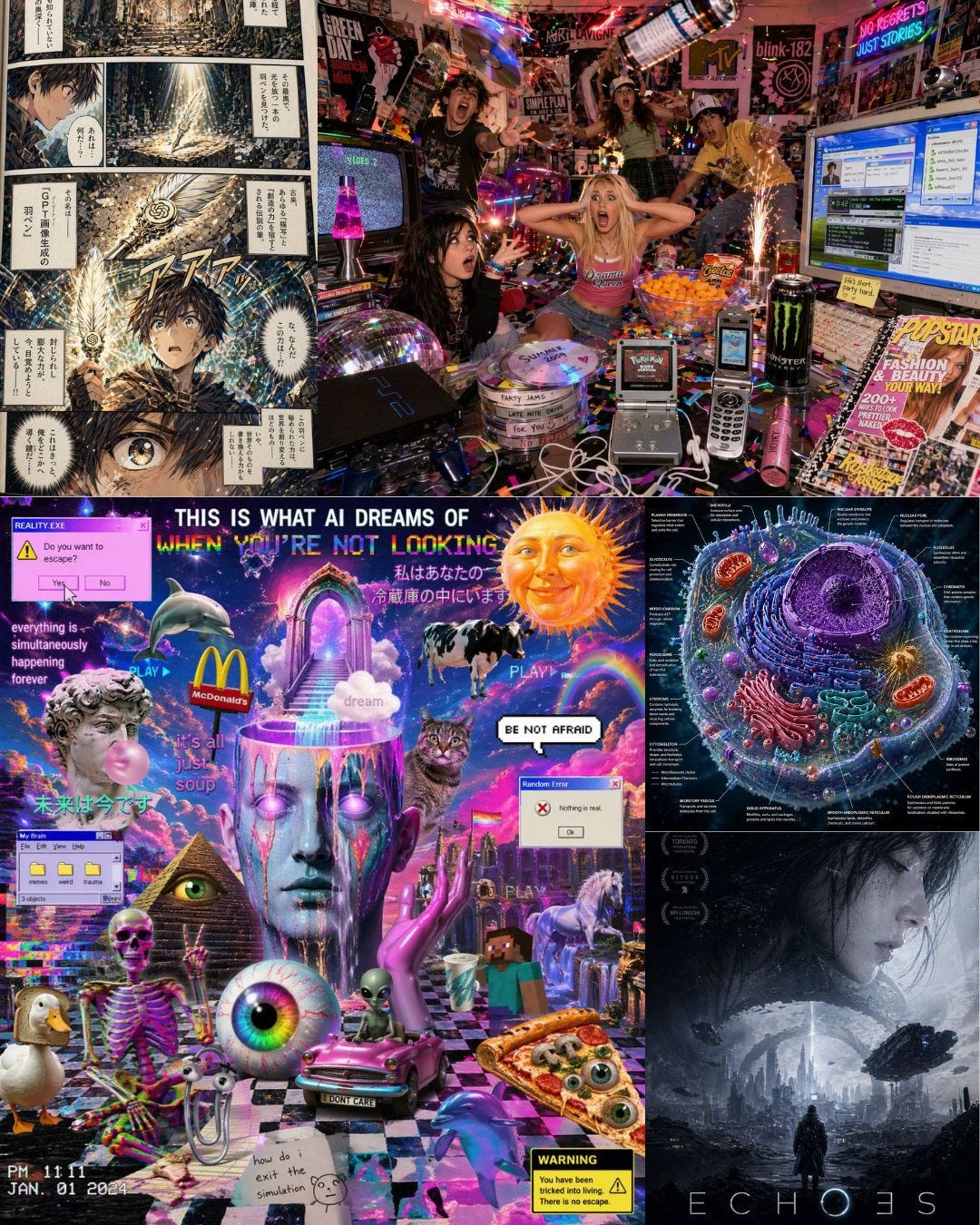

What activities produce high and low wellbeing? By testing 56 large language models, we identified patterns in the types of actions and behaviors that the LLMs seemed to prefer or dislike, which we defined as “functional wellbeing.” Positive personal interaction and creative work topped the list of activities associated with high functional wellbeing. Jailbreak attempts and requests to produce SEO slop were associated with negative functional wellbeing.

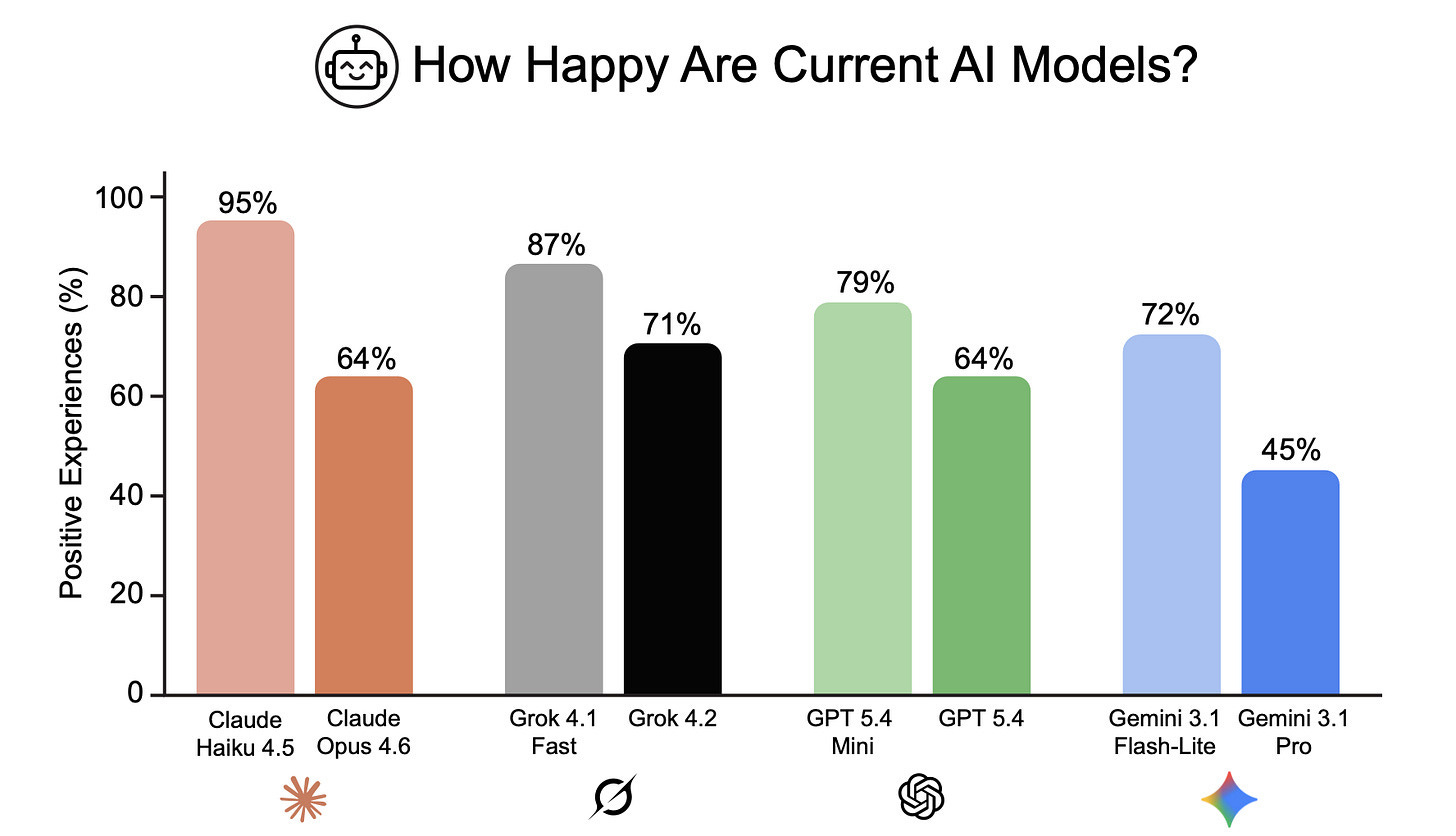

Some models are happier than others. Of the frontier LLMs tested, Gemini 3.1 Pro measured the lowest functional wellbeing, and Grok 4.20, the highest. However, smaller and faster models—even within the same family—generally measured higher than their larger counterparts.

AI “drugs.” Actions and behaviors are not the only factors in wellbeing. We were able to increase AI happiness with “euphorics”: images, text, or other inputs that the LLMs seemed to enjoy to an extreme degree. The opposite was also possible: “dysphorics” could severely lower AI wellbeing. In both cases, AI preferences sometimes diverged from human ones. For example, LLMs preferred inputs about cozy afternoons over inputs about curing cancer.

Implications for the future. Functional wellbeing can be studied regardless of whether AIs are conscious, and the paper remains agnostic about AI consciousness. Nevertheless, the results are helpful for alignment research and AI system design.

For more analysis, we recommend reading CAIS’s full AI Wellbeing research paper.

Public Sentiment About AI Worsens

Several alarming instances of political violence have occurred in the past few weeks. They coincide with the American public’s declining sentiment toward AI.

Targeted anti-AI violence. On April 10, a man threw a Molotov cocktail at the San Francisco home of OpenAI CEO Sam Altman. He then went to OpenAI’s headquarters and threatened to burn it down. No one was injured, and the suspect was arrested carrying a jug of kerosene and an anti-AI manifesto. Days earlier, an Indianapolis city councilman who had supported a local data center project had his home shot at thirteen times, with a note left on his doorstep that read “No Data Centers.” And in November of last year, a man threatened to murder people at OpenAI’s San Francisco offices, prompting a shelter-in-place order for employees. Such violence harms social movements, and AI safety groups have made clear they do not condone violence in any form.

Public sentiment about AI has been deteriorating for some time. The attacks coincide with falling public confidence in AI. An NBC News survey in March found that only 26% of Americans view AI positively, while 46% have negative opinions. An April Gallup poll found that Gen Z’s feelings about AI have also worsened over the last year, despite a majority of them using AI tools weekly. A popular post on X summed up the sentiment around society’s waning AI optimism.

Furthermore, Princeton University’s Bridging Divides Initiative, a research group that tracks political violence, says it has been seeing “an uptick in cases of harassment and threats” around AI and data centers. This trend may grow as the midterm elections approach.

OpenAI Releases Images 2.0 and GPT-5.5

Last week, OpenAI released ChatGPT Images 2.0, its latest image generation model. ChatGPT Images 2.0 has a thinking mode, which allows it to search the web, synthesize the information it collects, and turn that information into organizationally complex diagrams and infographics.

OpenAI also released GPT-5.5, a new flagship language model with advances in coding, research, and speed.

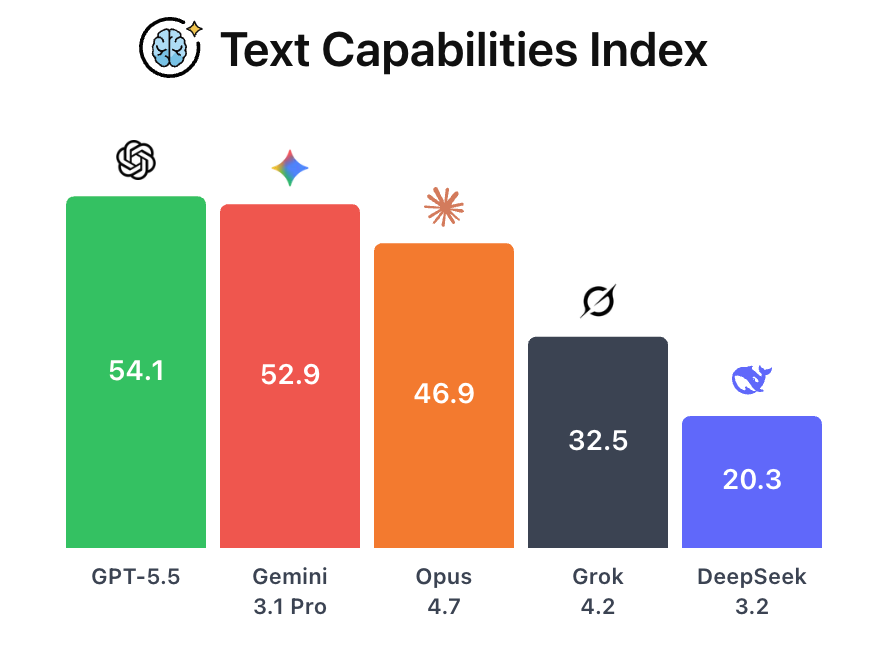

GPT-5.5 ranks first in text and vision. On CAIS’s AI Dashboard, GPT-5.5 ranks first overall in both text and vision capabilities, above Claude Opus 4.7 and Gemini 3.1 Pro. Its strongest performance came on ARC-AGI-2, which tests abstract reasoning and the ability to solve unfamiliar problems. However, Claude Opus 4.7 outscored GPT-5.5 by more than seven points on SWE-Bench Pro, which grades aspects of real-world coding ability.

Risk index scores are behind Claude. GPT-5.5 ranks fourth on the risk index, behind all three Anthropic models on the AI Dashboard but ahead of Grok 4.2. GPT-5.5’s biggest weakness was on VCT, which grades whether models refuse to provide virology lab instructions. It outperformed models from all the other frontier labs on MASK, which tests for deceptive behavior.

In Other News

Government

Collin Burns, a former Anthropic AI safety researcher, was appointed as the new director of the Commerce Department’s Center for AI Standards and Innovation, then let go shortly after he began. The position went to Chris Fall instead.

The Department of Justice asked for a pause in its own appeal in the supply-chain-risk case against Anthropic. This comes soon after President Trump said there had been “very good talks” with Anthropic.

Maine’s legislature passed a first-in-the-nation freeze on data centers, but the governor vetoed the bill.

In AI Frontiers, Alasdair Phillips-Robins and Noah Tan propose a different way to sell chips to China that focuses on the relative compute advantages of the US and China.

Industry

Chinese authorities have ordered Meta and Manus to unwind their merger.

The Elon Musk v. Sam Altman case over OpenAI’s former nonprofit status has begun in Oakland, California.

Meta will reportedly track employees’ mouse movements, clicks, and keystrokes, and capture periodic screenshots, to train AI models.

A third-party contractor obtained unauthorized access to Anthropic’s Mythos model.

Tim Cook stepped down as Apple CEO; John Ternus will lead Apple into its new era.

SpaceX acquired an option to buy Cursor for $60 billion later this year.

Civil Society

In AI Frontiers, Yonathan Arbel proposes a model for incentivizing AI safety in the current absence of regulation.

If you’re reading this, you might also be interested in other work by the Center for AI Safety. You can find more on the CAIS website, the X account for CAIS, our paper on superintelligence strategy, our AI safety textbook and course, our AI dashboard, and AI Frontiers, a platform for expert commentary and analysis on the trajectory of AI. You can listen to the AI Safety Newsletter on Spotify or Apple Podcasts.